Code Generation and Review with AI in Complex Repositories

with GitHub Copilot

In this article, you'll read about meaningful code generation and review in complex repositories with AI. I chose GitHub Copilot as the AI coding assistant, but what you'll learn is easily applicable to any other coding assistant.

My name is Vlad. I'm a software engineer at Dynatrace with degrees in AI and cybersecurity. I'm also a public speaker, and I've got great things to share.

Why?

Most of you have seen fancy demos where AI does impressive things when working from scratch, such as prototyping, toy examples, or cherry-picked cases. But what about serious engineering work in big, complex repositories?

Expectation vs Reality

If you've tried to apply AI to your daily work, you may have faced issues that led you to think it's not reliable enough yet.

The Tipping Point

I had a similar experience. But at a certain point, everything changed. Newer AI models and tools were released, and my own AI knowledge and skills grew to the point where I became faster with AI than without it, without compromising on quality. This was the tipping point: with sufficient guidance, AI can now tremendously aid you in your day-to-day engineering. For the last year, I've been using AI coding assistants daily, learning and iterating. Regardless of your stance on AI, it's now reliable and coherent enough that I encourage everyone to get on board and benefit from it in engineering. And don't worry: AI won't replace you, but a person who uses it well can.

What?

In this article, I'll show you exactly how to configure GitHub Copilot in your repository and get meaningful code generation and review. I'll share what I learned and provide a getting-started guide to help you create an initial set of Copilot instructions. While I use GitHub Copilot as my example, the principles defined here are transferable to any AI coding assistant. So let's jump right into it.

How?

Problem Statement

First, I want you to have a basic conceptual understanding of how AI works, based on the following simple diagram. Given a specific INPUT, the AI processes it into a specific OUTPUT:

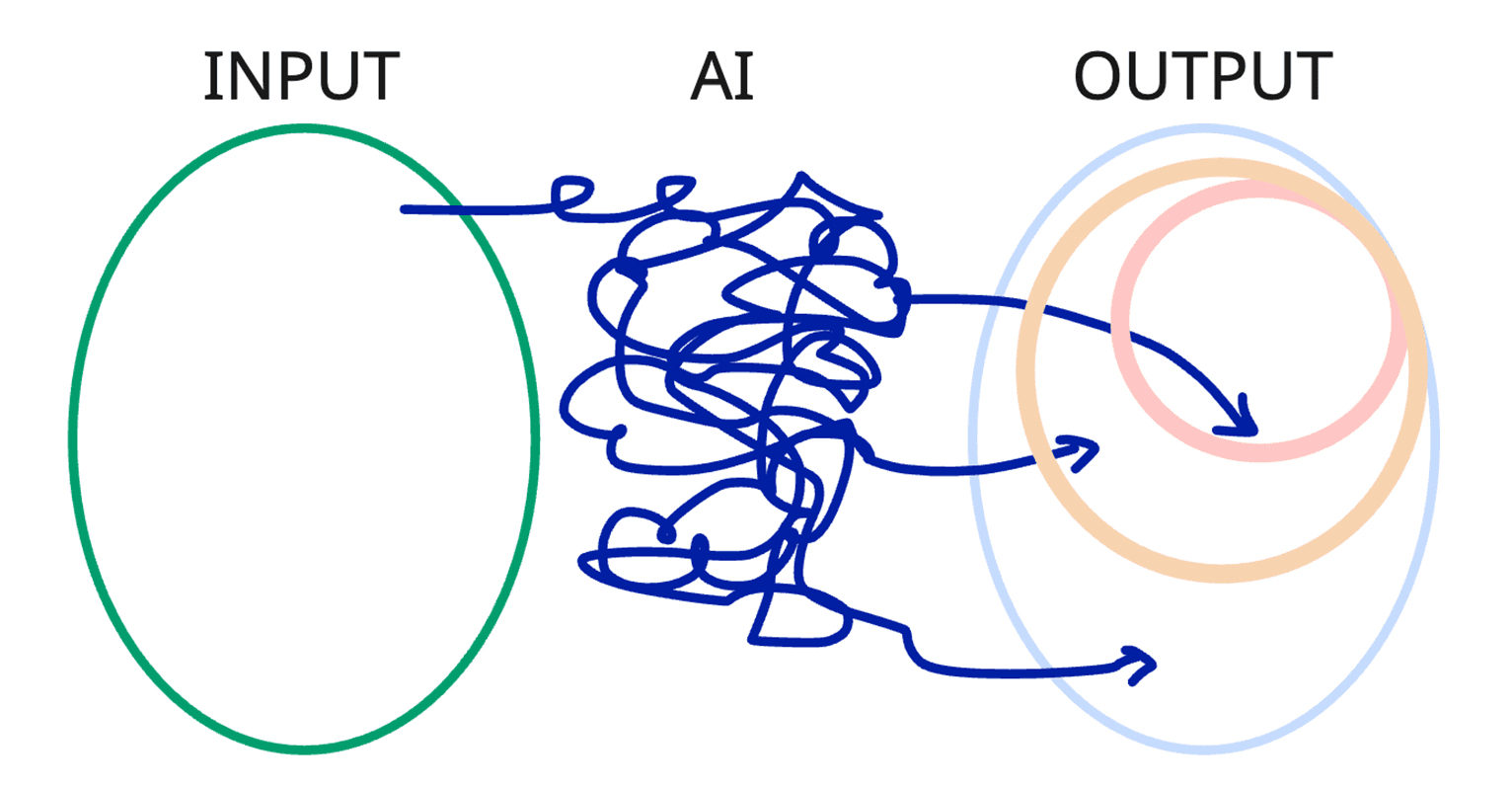

However, AI is a nondeterministic black box, so we can get different outputs for the same inputs:

And this is precisely the problem we have to address to get reliable enough output for every task we do.

While it's not possible to solve this problem completely, the next best thing we can do is shrink the output space by guiding the AI with instructions, and by doing so, we'll get a more reliable output:

In engineering, a preferred output is one that aligns with your codebase, follows established guidelines, avoids banned patterns, and so on.
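The idea of shrinking the output space can be pictured with a toy model. The sketch below is purely illustrative: the candidate outputs and the three rules are made up, and real instructions bias a model rather than filter its outputs strictly. It only shows how each added rule leaves fewer acceptable outputs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Candidate:
    """One output the model could produce for a prompt (made-up toy data)."""
    name: str
    uses_banned_library: bool
    follows_naming: bool
    has_tests: bool


Rule = Callable[[Candidate], bool]

CANDIDATES = [
    Candidate("A", uses_banned_library=True, follows_naming=True, has_tests=True),
    Candidate("B", uses_banned_library=False, follows_naming=False, has_tests=True),
    Candidate("C", uses_banned_library=False, follows_naming=True, has_tests=False),
    Candidate("D", uses_banned_library=False, follows_naming=True, has_tests=True),
    Candidate("E", uses_banned_library=True, follows_naming=False, has_tests=False),
]

# Hypothetical "instructions", each expressed as a predicate on an output.
RULES: list[Rule] = [
    lambda c: not c.uses_banned_library,
    lambda c: c.follows_naming,
    lambda c: c.has_tests,
]


def output_space_sizes(candidates: list[Candidate], rules: list[Rule]) -> list[int]:
    """Return the number of acceptable outputs after applying 0, 1, 2, ... rules."""
    sizes = [len(candidates)]
    remaining = candidates
    for rule in rules:
        remaining = [c for c in remaining if rule(c)]
        sizes.append(len(remaining))
    return sizes
```

With these toy values, `output_space_sizes(CANDIDATES, RULES)` goes from five acceptable outputs down to one as the rules accumulate.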

Solution: Available Tools

To shrink the output space, we must guide the AI, and for that we have multiple tools.

While the complete list of tools is extensive and may look intimidating, I suggest focusing on the most deterministic tools, as our goal is to reduce the output space. The first three tools are our primary building blocks for code generation and review:

Always-on Instructions - House rules. Apply always while you're in the house (house ~ repository: tech stack, architecture, documentation standards)

File-based Instructions - Manuals for the house's appliances. Apply while you interact with a specific appliance (appliance ~ file type: component file, test file, style file, etc.)

Prompt Files (Slash Commands) - Recipes. Applied on demand (recipes ~ algorithms: PR review, a11y review, security audit)

Screen reader-friendly (text) version of the table

| Tool | Think of it as… | What it does | When to use it | Why this, not another? |
|---|---|---|---|---|
| Always-on Instructions | 🏠 House rules | Project-wide coding standards and conventions are automatically included in every AI interaction | Naming conventions, architecture patterns, banned libraries, and security requirements | Unlike file-based instructions, these are never conditional - they define how to behave everywhere in the repo |
| File-based Instructions | 📖 Appliance manuals | Rules that activate only when the AI works on files matching a glob pattern or task description | Guide for .tsx components and use*.ts hooks, test conventions for Playwright .pw-test.ts, and for .test.tsx unit tests | Unlike always-on, these activate only for matching files - keeping context lean and rules targeted |
| Prompt Files (Slash Commands) | 🧑‍🍳 Step-by-step recipes | Reusable task templates you invoke on demand in chat via /command | Scaffolding a component, preparing a PR, running & fixing tests | Unlike instructions (passive guidance), prompts are active - you run them like a command to trigger a specific workflow |
| Custom Agents | 🎭 Specialist roles | Distinct AI personas with their own tools, instructions, and model preferences | Security reviewer, planner, solution architect, a11y specialist | Unlike prompts (single task), agents change who the AI is - restricting tools and shaping behavior for an entire session |
| Agent Skills | 🧰 Trade certifications | Portable capability bundles (instructions + scripts + resources) loaded on-demand | Testing workflows, deployment processes, and debugging procedures | Unlike instructions (text files), a skills directory can bundle code and examples; unlike agents (identities), skills are capabilities any agent can use. Open standard across tools |
| MCP Servers | 🔌 Utility connections | Plug external APIs, databases, and services into the AI via the Model Context Protocol | Querying a database, fetching from Jira, interacting with a browser via Playwright | Unlike skills (local knowledge), MCP connects to live external systems that the AI cannot reach on its own |
| Hooks | ⚡ Circuit breakers | Shell commands that execute automatically at agent lifecycle points (before/after tool use, session start/stop) | Block dangerous commands, auto-format after edits, and audit all tool invocations | Unlike everything above (guidance for the AI), hooks are deterministic code - they execute regardless of how the AI interprets your prompt |
| Language Models | 🧠 Engine selection | Choose different AI models optimized for speed, reasoning depth, or specialized tasks like vision | Fast model for quick refactors, powerful model for architecture decisions, vision model for UI work | Not a behavioral instruction - this is about picking the right brain for the job |

Table sources

All information in the table above is based on the official VS Code GitHub Copilot documentation:

Customize AI in VS Code - Overview & Quick Reference

When I tried out custom agents, they had no measurable impact on the task of general code generation and review. Overall, custom agents are more about scoping the regular "all-knowing" agent to a perspective, with a specific set of tools and skills. This means you won't need them initially, but at a certain point, as your AI customization grows, you may want to define a custom coding/review agent simply to scope the tools loaded. Furthermore, as of now, GitHub actually recommends using custom instructions for code generation and review.

MCP servers can be highly effective, but that largely depends on which MCP server you use and how it's implemented. Component library or design system MCPs, for example, are well worth your attention if you're doing frontend. Before using any MCP, please make sure it's trustworthy, as malicious MCPs do exist. Also, in most cases, when both an MCP and a CLI are available, the latter is preferable.

Hooks are a relatively new feature that recently came out of preview. They look very promising for our task of making the output space more deterministic, so I highly recommend looking into them once you've established the foundation using custom instructions and prompt files. They're especially useful for putting security measures in place, for example, to deterministically forbid reading .env files.
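As a rough idea of what such a guard could look like, here is a minimal sketch of the decision logic only. The event shape (`tool` and `path` keys) and the `allow`/`reason` response are hypothetical; the real hook payload and response format depend on your tool, so check its documentation before wiring anything like this up.

```python
from pathlib import PurePosixPath


def is_env_file(path: str) -> bool:
    """True for .env and variants such as .env.local, in any directory."""
    name = PurePosixPath(path.replace("\\", "/")).name
    return name == ".env" or name.startswith(".env.")


def decide(event: dict) -> dict:
    """Decide whether a tool call may proceed.

    `event` is a HYPOTHETICAL payload: {"tool": <tool name>, "path": <file path>}.
    The returned dict is equally hypothetical.
    """
    path = event.get("path", "")
    if is_env_file(path):
        return {"allow": False, "reason": f"Access to {path} is blocked by policy"}
    return {"allow": True, "reason": ""}
```

The point of the sketch is that the check is ordinary code: it gives the same answer every time, regardless of how the prompt was phrased.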

Lastly, the language model you select matters a lot. Generally, the latest Claude Opus is best for engineering, and the latest Gemini Pro is a good all-around option. As a rule of thumb, the higher the model's rate, the more capable it is. Keep in mind that it's wise to use a low-rate model for low-effort tasks to keep your quota usage balanced.

As of now, GitHub Copilot has request-based quota. This means that token count is irrelevant. Claude Code has token-based quota. Therefore, depending on your tools' billing model, you may need to optimize for different things - saving requests versus saving tokens.
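To make the difference concrete, here is a small arithmetic sketch. The two sessions and the `model_rate` default are invented numbers, and the functions are simplified stand-ins for the two billing models, not any vendor's real pricing formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    requests: int  # number of prompts you sent
    tokens: int    # total tokens consumed across the session


def request_based_usage(session: Session, model_rate: float = 1.0) -> float:
    """Quota consumed when only requests count (token volume is ignored)."""
    return session.requests * model_rate


def token_based_usage(session: Session) -> int:
    """Quota consumed when only tokens count (request count is ignored)."""
    return session.tokens


# Same total work, split differently (made-up numbers).
one_long_agent_run = Session(requests=1, tokens=400_000)
many_short_prompts = Session(requests=20, tokens=400_000)
```

Under the request-based model, the single long run costs 1 unit while the chatty session costs 20. Under the token-based model, both sessions cost the same.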

Example setup

Unfortunately, there is no silver bullet solution; the example above didn't work even when asking nicely or threatening the AI coding agent.

Hence, we have to approach the problem as engineers. Based on my experience, here is a foundational setup I'd recommend for a complex frontend repository (~100k lines + microservices):

1 global always-on instruction - your "house rules" that apply to every file, with every prompt

N scoped instruction files - one for each distinct file type pattern you have (components, hooks, tests, styles, etc.)

1 prompt file - covering your most repetitive task (for us it's reviewing code)

.github/
├── copilot-instructions.md ← Global (always active)
├── instructions/
│   ├── components.instructions.md ← src/**/*.tsx, use*.ts
│   ├── state-management.instructions.md ← *State.ts files
│   ├── styling.instructions.md ← *.css.ts files
│   ├── unit-testing.instructions.md ← *.test.ts(x)
│   ├── e2e-testing.instructions.md ← e2e-tests/**
│   ├── integration-testing...md ← integration-tests/**
│   └── accessibility.instructions.md ← src/**/*.tsx, tests
└── prompts/
    └── pr-review.prompt.md ← /pr-review slash command

The exact number and names of scoped instruction files depend on your project. The key idea is: one instruction file per distinct file type pattern. If your AI needs to behave differently when working on a component file versus a test file versus a state file, give each its own instruction file with a matching glob pattern.

Why this setup?

You may wonder:

Why this and not Custom Agents + Custom Skills?

It's a common question, and it's great if you ask it; I love when people question things. There are two main reasons to use Custom Instructions over Custom Agents + Custom Skills for Code Generation and Review.

Loading Mechanism

Skills descriptions are always in memory. Imagine having a stack of books describing a skill - all of them have a brief summary on the cover - "How to wash a cat", "How to peel a banana", and so on. The decision of which books to open and follow is fully up to the agent. This nondeterministic behavior hinders our efforts to shrink the output space.

Custom instructions are loaded by fixed rules rather than by the agent's choice. The global instructions file, .github/copilot-instructions.md, is always loaded when you're in the repository. File-based instructions, .github/instructions/<type>.instructions.md, are loaded only when the specified applyTo glob matches. Getting back to the example with skills: if you hold a cat, you'll deterministically get instructions on how to wash it; if you hold a banana, you'll deterministically get instructions on how to peel it.

By choosing Custom Instructions over Skills, we opt for a more deterministic loading mechanism, and hence we get a more reliable output. This is the most important reason.
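The loading rule described above can be sketched in a few lines. This is a simplified model, not Copilot's actual implementation: the glob matcher is hand-rolled, the patterns are adapted from the example setup (the `src/**/use*.ts` form is an assumption), and the real glob semantics may differ.

```python
import re

# Instruction file -> applyTo globs (adapted from the example setup; assumed).
SCOPED_INSTRUCTIONS = {
    "components.instructions.md": ["src/**/*.tsx", "src/**/use*.ts"],
    "unit-testing.instructions.md": ["**/*.test.ts", "**/*.test.tsx"],
    "styling.instructions.md": ["**/*.css.ts"],
    "e2e-testing.instructions.md": ["e2e-tests/**"],
}


def glob_to_regex(pattern: str) -> re.Pattern:
    """Translate a simple glob into a regex: '**' crosses directories, '*' does not."""
    parts = []
    i = 0
    while i < len(pattern):
        if pattern.startswith("**/", i):
            parts.append("(?:.*/)?")  # zero or more directories
            i += 3
        elif pattern.startswith("**", i):
            parts.append(".*")
            i += 2
        elif pattern[i] == "*":
            parts.append("[^/]*")
            i += 1
        else:
            parts.append(re.escape(pattern[i]))
            i += 1
    return re.compile("".join(parts))


def instructions_for(path: str) -> list[str]:
    """Global instructions always apply; scoped ones only when a glob matches."""
    loaded = ["copilot-instructions.md"]
    for name, globs in SCOPED_INSTRUCTIONS.items():
        if any(glob_to_regex(g).fullmatch(path) for g in globs):
            loaded.append(name)
    return loaded
```

For a given path the result is always the same: `instructions_for("src/cart/useCart.ts")` yields the global file plus `components.instructions.md`, while `README.md` gets only the global file. No model decision is involved in the selection.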

Storing Mechanism

As you may have noticed in the examples above:

Skills are a stack of books you always carry around, while

Custom Instructions are only given when you need them

Over time, as you get more and more skills, AI context will get more and more bloated, and the nondeterministic factor will increase. Your stack of books will get heavy. Your agent will struggle with selecting a correct skill when there are dozens of them and some are even overlapping.

To somewhat mitigate the issues that come with the growth of your AI customization setup, you'd need to add Custom Agents as wrappers for a set of skills and tools.

Overall, Custom Agents + Custom Skills are much harder to maintain than a set of Custom Instructions per distinct file type, and when it comes to the general task of code generation and review, they are ultimately the wrong solution when you can use custom instructions.

Setting up your own AI Customization

Now that you know how your foundational setup should look, let's talk about how exactly you should define it. As we all work with different technologies, it makes no sense for me to show you specific code snippets or anything like that. Instead, we'll focus on principles.

Principles that make instructions effective

After several months of daily use and iteration, I've distilled what makes instructions effective into a number of principles. These are not GitHub Copilot-specific - they apply to any AI coding assistant that supports customization. And since the AI world changes every week, I designed these principles so they stay relevant over time.

Core Principle: Always Reduce Output Space

This is the core rule behind every decision you make when customizing AI. Among the many customization options available - instructions, agents, skills, prompt files, MCP servers - always select the one that reduces the output space the most.

This works both ways. When you pick a tool that constrains AI behavior tightly (like custom instructions, which are always loaded and always read), the output becomes consistent and reliable. When you pick a tool that expands it (like custom agents with custom skills, which the model may or may not follow depending on context), the result quality varies significantly - and it may not be reliable enough for daily use.

A concrete example (recap from the Why this setup? section above):

GitHub Copilot offers both custom instructions and custom agents with skills. Instructions are stored as markdown files, loaded automatically based on glob patterns, and applied deterministically - the AI reads them every time. Custom agents and skills, by contrast, define a persona and a set of capabilities, but the model follows them at its own discretion. In practice, after long and thorough A/B testing, I found that custom instructions perform better for code generation and review.

Every other principle is an application of this rule.

P2: Start with the big picture

Imagine that the AI agent is a contractor whose memory is wiped every time you assign a task. Your AI customization serves as persistent memory for that contractor. Any time a session starts, the agent can read your customization files to save the effort of figuring out what is going on.

Your global instruction file should open with what the project is - a one-liner on purpose, tech stack, and architecture style. Then narrow into coding standards, patterns, and anti-patterns.

Why it works: it gives the AI the same context tree you'd give a new team member during onboarding. Without the big picture, the AI has to guess the project's nature from the files it reads, and it often guesses wrong. And even when it guesses right, a single line in the instructions saves it from repeating that guesswork in every session.

P3: Keep iterating over your setup

Note: the chart is illustrative only and does not represent real measured data.

We already defined memory for the agent. Next, make sure that this memory - your AI customization - grows over time, the same way a new colleague's knowledge grows during their work. You shouldn't treat instructions as a set-once-and-forget artifact.

The first version of your instructions will be imperfect, and that's fine. What matters is that every time the AI makes a repeatable mistake, you recognize it as a signal: there's a missing rule, a vague rule, or a wrong rule. Add it, clarify it, fix it. Over weeks and months, your instructions become increasingly precise, and the AI's output becomes increasingly reliable.

This is fundamentally different from linting rules or CI checks, which are static once written. AI instructions are a living document that evolves with the codebase and the team's understanding of how to guide the model.

Another benefit of iterating is a clear progression. If you copy-paste a best-practice setup, it may work well, but not as well as one tailored over time, and not as cost-effectively, because all the redundant customization consumes tokens.

Copy-pasting would also rob you of the insight you get from A/B testing while iterating on your own setup. What you copy may work, but you'll have little to no insight into what is important and what is not.

P4: Establish a reflection process

We have established a persistent memory and a continuously growing knowledge base. Next, make sure that the quality of this memory stays high as it grows. For that, we need to establish a reflection process.

Let the AI self-reflect on the mistakes it makes. When something goes wrong, let the AI analyze what happened, define actionable adjustment points, and present them to you for verification. Once you approve, the agent applies the adjustment to the instruction files directly.

This same reflective process can automate customization maintenance more broadly. Whenever the AI detects inconsistencies between the codebase and the instructions, such as a deprecated pattern that instructions still prescribe, a new convention that instructions don't mention, or redundant rules across files, it can suggest changes and apply them after your approval.

You'll see suggestions like: "Your instructions say to use pattern A, but 15 out of 16 files in the codebase use pattern B. Should I update the instructions?" Just approve the fix, and your instructions stay current.

The result is a feedback loop: the AI helps maintain its own guidance, keeping instructions aligned with reality without significant manual effort.
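The inconsistency check behind such a suggestion can be approximated with a simple count. The sketch below is an assumption about how one might detect drift, not how any assistant does it internally; the function name, the regex-based detection, and the 80% threshold are all hypothetical.

```python
import re
from typing import Optional


def detect_drift(
    files: dict[str, str],
    prescribed: str,
    observed: str,
    threshold: float = 0.8,
) -> Optional[str]:
    """Suggest an instruction update when most files contradict the prescribed pattern.

    `files` maps path -> file content; `prescribed` and `observed` are regexes.
    A file that matches both patterns is counted once for each.
    """
    uses_prescribed = sum(1 for text in files.values() if re.search(prescribed, text))
    uses_observed = sum(1 for text in files.values() if re.search(observed, text))
    total = uses_prescribed + uses_observed
    if total == 0 or uses_observed / total < threshold:
        return None
    return (
        f"Instructions prescribe {prescribed!r}, but {uses_observed} out of "
        f"{total} files use {observed!r}. Should I update the instructions?"
    )
```

The function only produces a suggestion string. Applying the change stays behind your approval, as described above.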

P5: Encode team processes, not just code patterns

Your instruction files aren't limited to code style. AI is a nondeterministic tool, so choose the tasks you assign to it accordingly. For example, let's consider the task of a review. Within your AI customization, encode things like:

Commit/changeset message format rules

PR description templates (what a good PR description includes)

Versioning conventions (how to determine patch vs. minor from the branch name)

Review checklists (code quality, security, accessibility, testing, documentation)

These are exactly the kinds of things that are tedious for reviewers and trivial for the AI to enforce, if you tell it how.

For humans, leave the high-stakes, high-level tasks such as architecture, design, business logic correctness, and knowledge sharing.

Why it works: it automates the mechanical parts of review, freeing humans to focus on architecture and logic. The AI becomes a reliable first pass that catches what humans often overlook.
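A process rule is easiest for the AI to enforce when it is as unambiguous as code. As an illustration, here is the versioning convention from the list written out explicitly. The prefix-to-bump mapping is a hypothetical convention; substitute whatever your team actually uses.

```python
# HYPOTHETICAL convention: the branch prefix decides the version bump.
BUMP_BY_PREFIX = {
    "fix": "patch",
    "chore": "patch",
    "docs": "patch",
    "feat": "minor",
}


def bump_from_branch(branch: str) -> str:
    """Return 'patch' or 'minor' for a branch such as 'fix/login-redirect'."""
    prefix = branch.split("/", 1)[0].lower()
    if prefix not in BUMP_BY_PREFIX:
        raise ValueError(f"Unknown branch prefix: {prefix!r}")
    return BUMP_BY_PREFIX[prefix]


def next_version(current: str, bump: str) -> str:
    """Apply a patch or minor bump to a 'major.minor.patch' version string."""
    major, minor, patch = (int(part) for part in current.split("."))
    if bump == "patch":
        return f"{major}.{minor}.{patch + 1}"
    if bump == "minor":
        return f"{major}.{minor + 1}.0"
    raise ValueError(f"Unsupported bump: {bump!r}")
```

In an instruction file, the same mapping would be stated in prose or as a short table. The code form is just a way to check that the rule leaves no room for interpretation.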

P6: Encourage AI to ask questions instead of assuming

Add a section telling the AI to ask clarifying questions before starting work, and to ask again whenever new uncertainties arise during work.

This saves you enormous time on prompt writing. Instead of considering every possible detail and spending 15 minutes writing a comprehensive prompt, you can write a rough one-minute ask. The AI will explore and answer all it can, and then ask questions about everything else - all within one request, so that your quota is not needlessly consumed with back-and-forth.

Why it works: it reduces ambiguity without human effort upfront. The AI becomes a collaborator that surfaces the right questions rather than a tool that silently assumes. Assumptions lead to mistakes. Mistakes lead to another try, another loop, and that costs you much more, both in tokens and engineering time, than the extra time you spend answering a couple of questions.

P7: Define banned patterns, refer to golden files for approved replacements

AI models come with biases from their training data - they'll default to patterns they've seen most often, which may not be what your project uses. To counteract this, explicitly define what's banned and point to your golden files (reference implementations) as the source of approved replacements.

Don't just say "don't use X". Say "use Y instead of X; see golden-component.tsx for the approved pattern". A table format works well for the bans:

| Banned | Use instead |
|---|---|
| Pink/Purple/Indigo Gradients | Semantic, Brand-Specific Hex Codes |
| framer-motion Overkill | CSS Transitions or Purposeful Motion |
| Single-File Monoliths | Modular Component Architecture |
| useState Hell | Themed/Customized Components |

Note: the table is for illustrative purposes; you would have to be more specific for a real customization file to be effective.

For golden files, point to 1-2 real files per file type pattern that exemplify the established patterns. "When in doubt, follow this example." The AI can read these at any time to see how things are done in practice.

And, of course, you can also refer to golden files in the banned patterns table.

Why it works: banning alone leaves a vacuum - the AI will either ignore the ban or invent a wrong alternative. Golden files fill the vacuum with a concrete example. Together, they help the AI unlearn biased patterns and learn your project's conventions.
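The "ban plus replacement plus golden file" structure can also be expressed as data, which makes it easy to see what a specific rule looks like. The sketch below is illustrative: the regexes, replacement texts, and file paths are hypothetical, and a scanner like this would be a deterministic backstop alongside the instructions, not a substitute for them.

```python
import re
from typing import NamedTuple


class Ban(NamedTuple):
    pattern: str      # regex that identifies the banned usage
    use_instead: str  # the approved replacement
    golden_file: str  # reference implementation to follow


# HYPOTHETICAL rules and paths, for illustration only.
BANS = [
    Ban(r"from ['\"]framer-motion['\"]", "CSS transitions",
        "src/components/golden-component.tsx"),
    Ban(r"linear-gradient\(", "semantic color tokens",
        "src/styles/golden-theme.css.ts"),
]


def find_violations(source: str) -> list[str]:
    """Return one message per banned usage, always naming the replacement."""
    messages = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for ban in BANS:
            if re.search(ban.pattern, line):
                messages.append(
                    f"line {line_no}: use {ban.use_instead} instead "
                    f"(see {ban.golden_file})"
                )
    return messages
```

Note that every message carries both the replacement and the golden file, so a ban never appears without its alternative.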

P8: Require self-validation before "done"

Tell the AI to run typecheck, lint, and relevant tests before claiming a task is complete. Something like:

"Before stating that a task is complete, ALWAYS run validation: typecheck, lint, and unit tests for affected code. All commands must pass before considering the task done."

This one rule changes the experience more than anything else. Instead of the AI handing you broken code and saying "done," it now self-validates every time and keeps working until all checks are green.

Why it works: it shifts the first round of verification cost to the AI. You still review the output, but it arrives in a working state.
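If your repository has no single validation entry point yet, a small wrapper gives the AI one command to run. This is a minimal sketch: the `npm` script names are assumptions about your project, and the `runner` parameter exists only so the logic can be exercised without actually spawning processes.

```python
import subprocess
from typing import Callable, Sequence

# HYPOTHETICAL commands; replace with your project's real scripts.
CHECKS = [
    ("typecheck", ["npm", "run", "typecheck"]),
    ("lint", ["npm", "run", "lint"]),
    ("unit tests", ["npm", "test"]),
]


def run_command(command: Sequence[str]) -> bool:
    """Run one command and report whether it exited successfully."""
    try:
        return subprocess.run(list(command)).returncode == 0
    except FileNotFoundError:
        # The executable is not installed; treat this as a failed check.
        return False


def validate(
    checks: Sequence[tuple[str, Sequence[str]]] = CHECKS,
    runner: Callable[[Sequence[str]], bool] = run_command,
) -> list[str]:
    """Run every check and return the names of the ones that failed."""
    return [name for name, command in checks if not runner(command)]
```

An empty list from `validate()` means all checks are green. The instruction can then refer to this one script instead of listing the commands individually.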

Demo

Now it's time for practice. You'll see multiple demos below. The demo videos, unfortunately, don't include commentary - for that you'd need to attend one of my live talks. However, the demos are self-explanatory, and I'll describe the overall ideas for each of them here.

How to create AI Customization files

Most modern AI coding assistants ship with prompt/command/flow helpers to guide you through creating AI customization. These commands may be invoked via / in the input field of the tool you chose or, in the case of Copilot, from the UI.

In the demo below, you'll see what your first steps with GitHub Copilot can look like, and how to create the most important piece of customization: always-on instructions.

How to create a review prompt

Next, we'll create a prompt for reviews. In this demo, you can see how I used AI to analyze the last ~500 fix PRs for the most common issues and bad patterns, and then created a command to help catch those.

Reviewing code with custom "review" command

Now we're going to use that command on the last merged commit in the immich repo. You'll see that even though the PR had approvals from humans, there are still a couple of issues, albeit minor, that were easily surfaced via our review command.

General tips on AI Agent mode

Here I show you how a good agent flow can look. Since there is no single project or stack everyone is familiar with, instead of generating code, I opted to generate an article about immich, as this is something everyone can understand. Code generation would work exactly the same.

Pay specific attention to prompt instructions regarding subagents and #askQuestions tool calls to avoid assumptions.

Also note that since this all happens within one request, I'm billed only once, regardless of how long it runs or how many tokens are consumed. Hence, in this case, where we have a per-request billing model, instructing the model to ask questions is even more beneficial.

Your next steps

Call to action

💡 Get inspired by the presented setup

📄 Use the /init command to get a template to adjust, or do it manually

♻ Iterate over the instructions continuously as you see mistakes Copilot makes

🤖 Benefit from AI-assisted code generation and review, and advance your AI skills

Reach out to me

Feel free to ask questions here, connect with me on LinkedIn, come to my next public talk, or invite me as a speaker. Let me know what you liked or disliked, and what you'd like to learn next; I have a lot to share.

My socials:

LinkedIn: https://www.linkedin.com/in/vladkrv

GitHub: https://github.com/vladkrv